By Conor Griffin, Joslyn Barnhart & Owen Larter

In 1971, the marine archeologist Honor Frost heard news of wood protruding from the sea floor. Off the western coast of Sicily, she and her team donned scuba gear, and splashed into the shallow coastal waters. Wind whipped the surface, causing the underwater sand to swirl confusingly. But even in murk, they couldn’t miss it.

“A large timber (such as I had never seen before) emerged,” she recalled, “like the head of a primeval animal crowned with weed; the presence of a buried wreck was evident.”

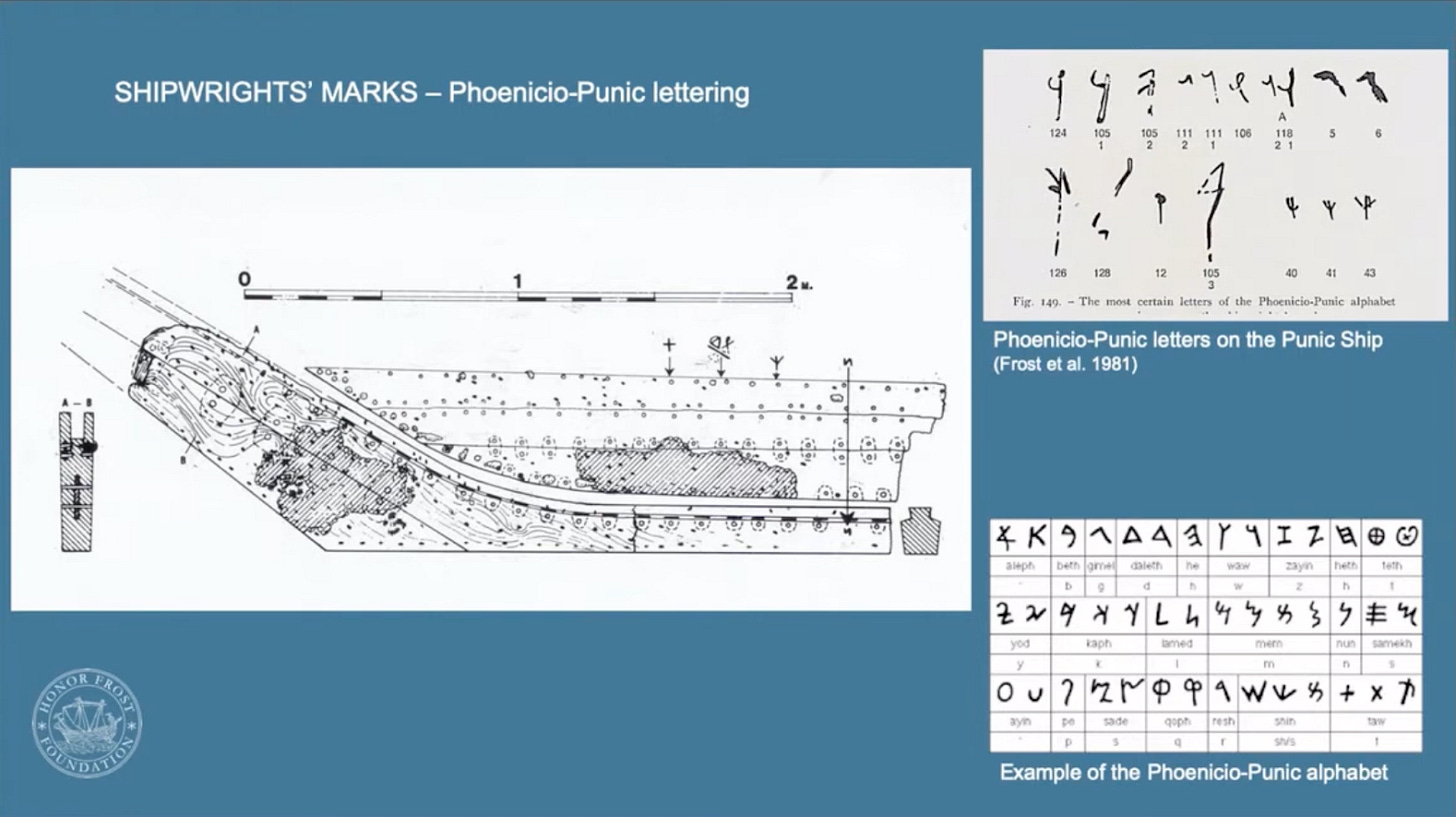

They excavated for months, gradually exposing the remains of a Carthaginian warship sunk more than 2,000 years before. Somehow, saltwater hadn’t eaten away letters painted on the wreckage, revealing a humble system that links antiquity to tomorrow.

Those shipwrights’ marks told workers in ancient Carthage how to put together a vessel—akin to flat-pack furniture from IKEA, with numbered and lettered pieces. They were among the earliest surviving examples of a simple but potent tool in human progress: the technological standard.

Many of history’s grand projects have benefited from standards, from Egypt’s pyramids, to Europe’s cathedrals, to Gutenberg’s press, to everyone’s Internet. You can even thank standards for the development of beer.

Underpinning technological standards is a plain truth: people thrive when they can cooperate, not when they must keep renegotiating the basics, whether it’s a matter of nuclear safety, or a phone-charger cord, or who goes next at the intersection. So, the goal is order. And the benefits are that innovators can proceed without excessive obstacles, while everyone else is treated fairly and kept safe.

But what should standards mean for artificial intelligence? In particular, how can they guide the most advanced large language models and AI agents that could transform society?

Venture around the AI frontier today, and you’ll find ambition to accelerate AI for economic growth and transformative science alongside concern that AI could clatter into what humans cherish most. What few dispute is this: standards will help set the path.

Standards have critics too. One criticism is that companies dominate the process, prioritizing their own products or miming security without truly ensuring it. Besides this, standards can stir geopolitical tensions, as when Western countries fear China’s influence in laying the path to tomorrow, while smaller nations worry that standards may be set without considering them at all.

So, as we’ll keep insisting, standards matter! Only, there’s a problem.

For some, the mere mention of “standards” prompts slumber. And even those determined to stay awake may find themselves puzzled, gazing at the alphabet soup of standards organizations and committee meetings.

Part of the problem is that standards are often technical, such as efforts to standardize the protocols needed for AI agents to communicate. Or they are bureaucratic, negotiated out of public view, with dense, jargon-filled documents that are often behind a paywall.

Complicating matters even more, artificial intelligence is a general-purpose technology less akin to a hammer than to electricity. This will lead to standards (plus standards initiatives that don’t take) on everything from AI agents, to AI cybersecurity, to AI content provenance, to product-specific standards for AI-as-a-medical-device, and so on. And that’s not even mentioning standards for future AI applications that nobody has yet considered.

In short, standards will be immense. Standards will be tough to comprehend. But standards will also be vastly important.

CAN SOMEONE DEFINE STANDARDS, PLEASE?

Standards are a diabolical blend: intricate, vague, and slippery.

They’re the invisible infrastructure of the modern world, according to Laurie Locascio, head of the American National Standards Institute, ANSI. She recounts hearing an official at Boeing describe the airplane itself as “thousands of standards taking flight.” Standards are “the things you don’t think about,” Locascio says. “But oh, my God, you’re so glad they’re there.”

Expressed broadly, a standard defines the how of tech, whether it’s the default product specs that allow compatibility among manufacturers, or the formally endorsed risk management processes that encourage industry to act responsibly.

As technology evolves, standards do too. A leading scholar, Ken Krechmer, once noted that standards initially defined how physical objects fit together (as with those markings on the Carthaginian warship). Over time, standards came to define the relationship between technological objects (as with internet protocols).

A standard also builds on other forms of guidance, such as norms, principles and industry best practices. Unlike norms, standards should be explicit. Unlike aspirational principles, a standard should be specific enough for performance against it to be judged. Unlike early best practices, a standard should have clear buy-in.

Developing a standard can be a protracted endeavor. In some cases, it might start in a researcher’s notebook, evolving into a product or a practice that gains traction in the marketplace. At other times, institutions set standards via years of deliberations and meticulous documents. Most often, it’s a messy back-and-forth between standards that emerge in practice and on paper. This makes standards a source of tension among companies, governments, and independent advocates, all trying to set the technological future they consider best.

Some presume that laws should be how we define permitted behavior. But high-quality legislation can struggle to keep up with the frantic speed of AI progress. And when laws are passed, they may rely on standards for implementation, as with the EU AI Act.

So how to persuade everyone to care when encountering standards, rather than just to snore or sob? How to get policy leaders to ponder the entirety of frontier-AI standards and align on where action is most needed?

Our answer is storytelling: to pluck forth tales about past standards, illustrating what this technological shaping can achieve, where it goes wrong, and how we might help cultivate standards wise enough to manage the breadth and speed of AI.

Our first stop? A battlefield of centuries ago.

A ‘STANDARD’ HISTORY

Horrors encircled the boy soldier: swords clanging under the rain, excruciating howls of the wounded, the fast-approaching bellows of men hurtling across the bog to murder him. In wet turf, he shivered from knees to chattering teeth, his mouth parched, his gaze searching for any escape.

Up there?

On a hill, a flag rippled, where his legion had marked its territory. The Old French word for that banner was “estandart”: a sign of firmness and stability, a marker of where to go next, a statement of order amid chaos. To such banners, we owe the word “standard.”

More than a few historical standards emerged from war, where disorder could mean one’s brethren murdered, while coordination could mean an empire.

~225 BCE to the dawn of mass production

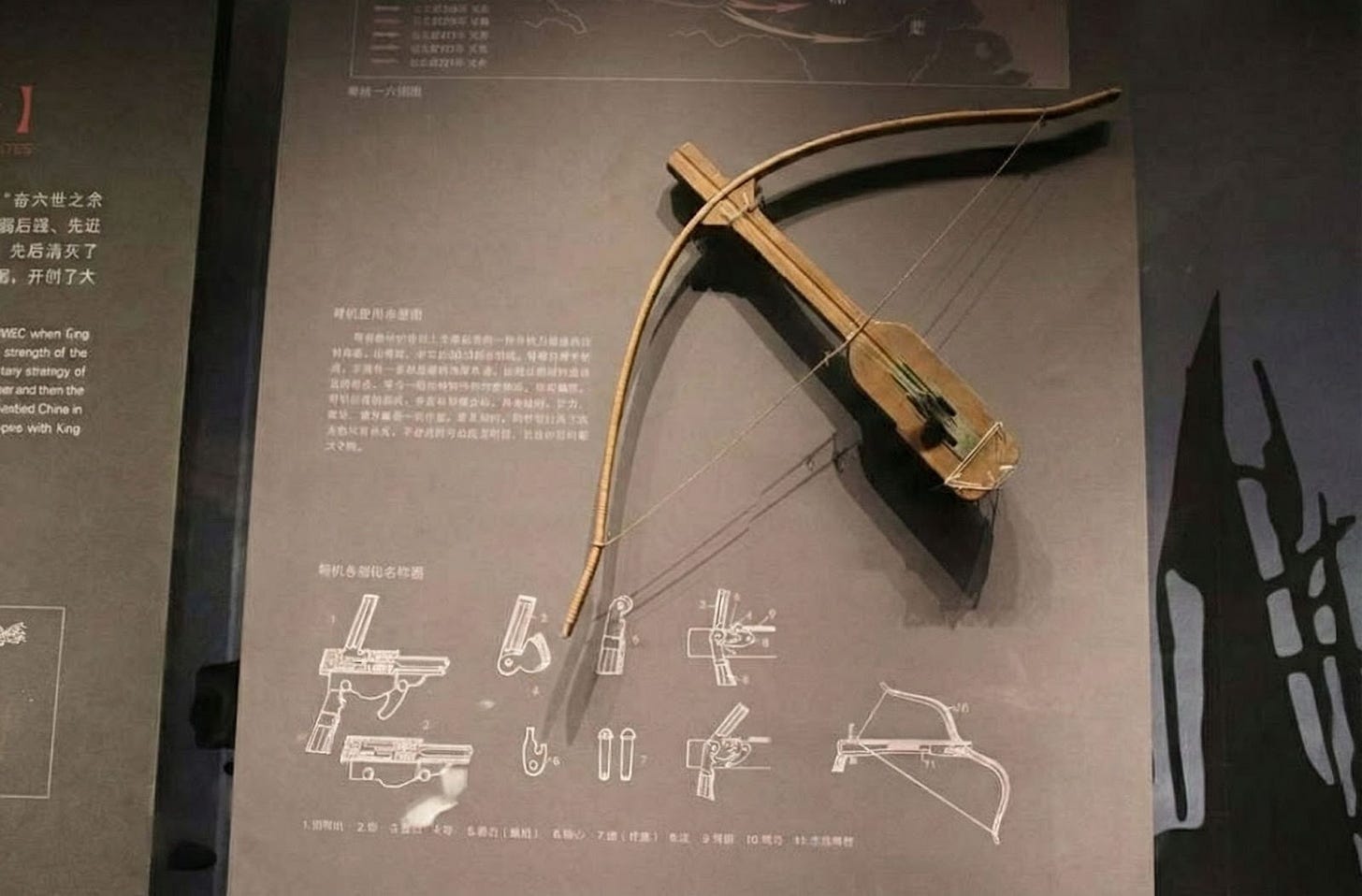

China’s first emperor, Qin Shi Huang, led an extensive standardization process that included mass-produced crossbow parts. If parts of a soldier’s weapon broke in the midst of battle, he could grab spares, and swap them in.

When Ancient Rome fought for primacy in the Mediterranean, its forces copied Carthaginian ship designs, eventually triumphing with standardized ships of their own, along with standardized tools and camp layouts, all of which simplified maintenance and large-scale coordination.

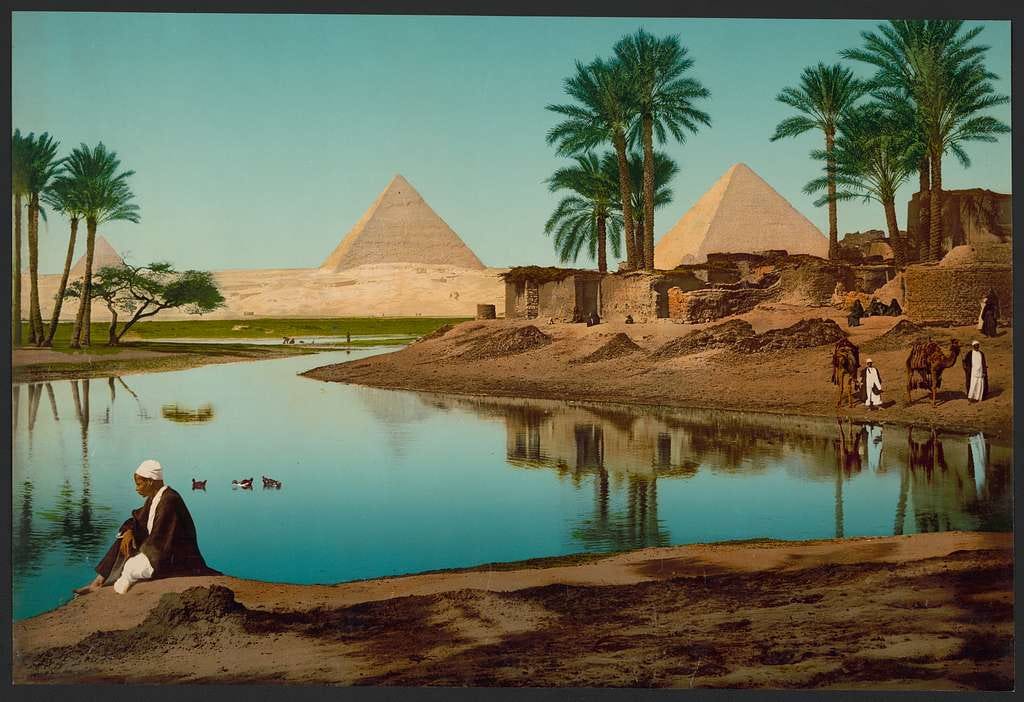

Another advance in ancient times came from standardized measurements for length, volume, and weight. Previously, cultures often had distinct units; you can imagine the squabbling. But as trade expanded, standards prevailed, making cross-cultural exchange possible. In ancient Egypt, one of the earliest and most influential standards was the cubit, a unit of length used to coordinate the building of the pyramids.

In Europe’s medieval period, guilds established standards for quality control, so that weavers might set the necessary thread count or width of cloth, preventing low-quality products from undermining a craft’s reputation. Guilds also played a protectionist role, with licensing standards imposing strict controls on who could become a member.

The consumer might benefit from standards too, with measures such as England’s Assize of Bread and Ale of 1266 establishing the acceptable quality, quantity, and price of baked goods and beer. Later, Gutenberg’s standardized press led to mass-produced books that spread ideas across the Continent.

However, technological standards reached new heights of utility during the Industrial Revolution, which set the foundations for many of today’s technologies.

1760-1840: The First Industrial Revolution — The rise of engineers

As ancient Chinese and Carthaginians had discovered long before, the Industrial Revolution’s manufacturers found that interchangeable parts offered transformative efficiency. Before, if you hand-built a musket, or a clock, or a steam engine, you might craft each screw, each bolt, each gear to fit. By contrast, interchangeability allowed for mass production, cutting costs, reducing errors, and establishing the basis for modern industry.

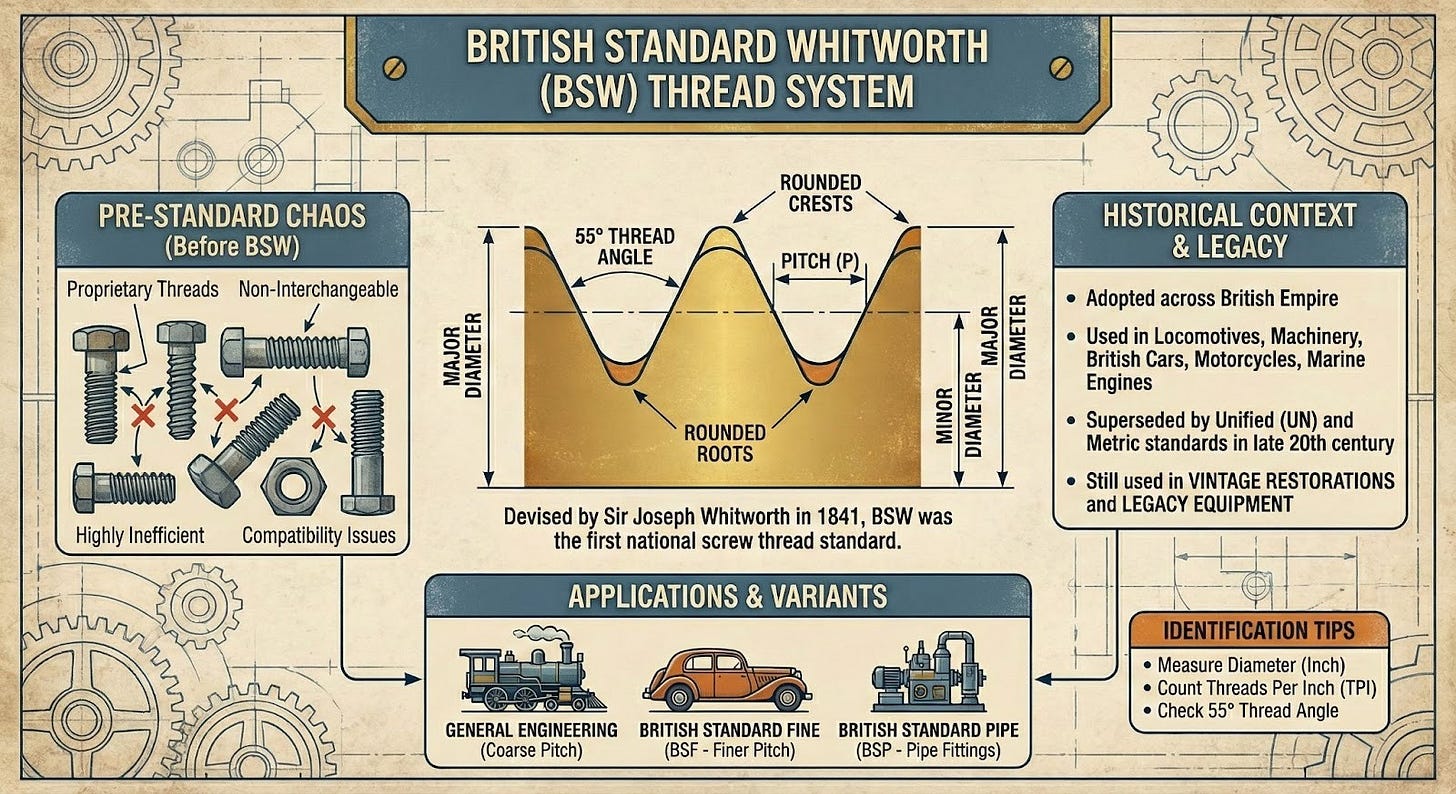

Screw threads are a classic example. Before standards, manufacturers used various designs, making repairs nightmarish. If you had one company’s bolt but another company’s nut, you were out of luck. In the 1800s, engineers built the first practical screw-cutting machines, allowing factories to produce uniform threads and a consistent system of measurement. The British Standard Whitworth became the first such standard in the world.

Screw-thread standards may not quicken your pulse. But their effects might. They played a part in British imperial ambitions, contributing to the expansion and maintenance of the British Empire through military mobilization.

1870-1914: The Second Industrial Revolution — National coordination & path dependence

The emergence of electricity, steel, and advanced machinery led to vast interconnected systems, including power grids, railways, and telegraph networks. Coordination wasn’t merely better; it was essential. To coordinate across a nation—and eventually across borders—the ambitious country needed technology standards. Two famed cases illustrate this, one successful, one bungled.

The success regards the quintessential technology of the times: railroads. By the 1870s, the U.S. rail system was a mess, with more than 20 different track gauges. When a train reached a section built to a different track-gauge width, everything—each passenger, piece of luggage, every single crate—had to be unloaded, and transferred to a new train.

By the 1880s, matters had become slightly less chaotic, with either a southern gauge or northern “standard” gauge used across most of the country. Yet this still divided national transport until, in 1886, rail companies pulled off a remarkable feat. Over two days, they converted 13,000 miles (that’s 21,000 kilometers) of southern U.S. track to the northern standard, integrating the national transportation network. When trains rolled out on June 2, 1886, they were able to travel seamlessly across the United States for the first time in history.

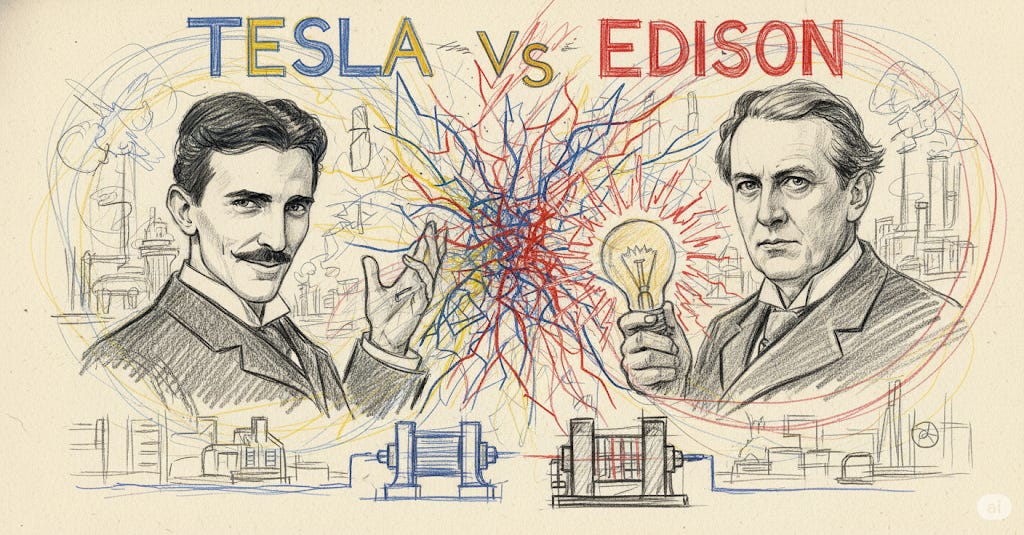

A second case illustrates bungled standards. In the 1880s, the rival inventors Thomas Edison and Nikola Tesla found themselves at the center of “the War of the Currents.” Edison championed direct current (DC), a one-directional flow of electricity that had been the early U.S. standard. Tesla, backed by the industrialist George Westinghouse, advocated alternating current (AC), electricity that reverses direction many times per second and can be stepped up or down in voltage with a transformer.

From an engineering standpoint, AC had a decisive advantage: it could transmit power over long distances cheaply and efficiently, while DC could not. AC eventually won out. But by the time it had emerged as the superior solution, the world had already built electrical systems without any coordinated technical governance. As there was no international authority harmonizing electrical standards, the United States went with 120 volts at 60 hertz (a legacy of Edison’s early low-voltage DC networks). Much of the rest of the world adopted 230 volts at 50 hertz.
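Why does voltage matter so much for distance? A rough sketch, assuming a purely resistive line and ignoring everything else an engineer would account for: for a fixed amount of power, raising the voltage lowers the current, and the heat lost in the wire scales with the square of the current. The function name and the figures below (10 kilowatts, a 1-ohm line) are illustrative assumptions, not historical data.

```python
def line_loss_watts(power_w: float, voltage_v: float, resistance_ohm: float) -> float:
    """Power lost as heat in a resistive line (simplified model: loss = I^2 * R)."""
    current_a = power_w / voltage_v          # I = P / V
    return current_a ** 2 * resistance_ohm   # loss = I^2 * R


if __name__ == "__main__":
    power = 10_000.0      # hypothetical load: 10 kW
    resistance = 1.0      # hypothetical line resistance: 1 ohm

    low = line_loss_watts(power, 120.0, resistance)      # household-level voltage
    high = line_loss_watts(power, 12_000.0, resistance)  # stepped up 100x

    print(f"loss at 120 V:    {low:.1f} W")
    print(f"loss at 12,000 V: {high:.3f} W")
    print(f"ratio: {low / high:.0f}x")
```

In this toy model, stepping the voltage up 100-fold cuts the loss 10,000-fold. Transformers made that step-up easy for AC, which is the advantage the paragraph above describes.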

Once wires had been laid and appliances built, the world was locked into two incompatible systems. To this day, we’re burning out hair dryers bought in America but used in Paris, or realizing too late that we don’t have the right plug for our laptops. If it’s irksome for the average user, it’s more burdensome for manufacturers, obliging them to build different versions for different countries.

Another classic tale of path dependence is under our fingertips as we type: the QWERTY keyboard. Why does the top row spell QWERTYUIOP? One account goes like this: In the mid-to-late 1800s, early typewriters jammed each time the user struck neighboring keys in rapid succession. So, designers produced a layout that deliberately distanced many common letter pairs. Remington purchased this QWERTY design, and began mass-producing typewriters.

Before long, typing schools had trained the future secretarial workforce on QWERTY, while firms wanting fleet-fingered staff had to buy those machines. Manufacturers subsequently resolved the key-jamming problem and other keyboards tried to depose QWERTY, some claiming to quicken typing by as much as 40%. But QWERTY had become a de facto standard. (Scholars continue to debate the specifics, with some arguing that QWERTY works just fine.)

In any case, the indisputable lesson is to watch for path dependence. The standards we establish for frontier AI today—or fail to establish—may determine future efficiency or future failure.

1914-1964: Standard Development Organizations & Digital Technology

In 1918, engineering societies joined with the U.S. government to establish a standards committee that developed into ANSI, the American National Standards Institute. Today, ANSI provides the “stamp of approval” for many U.S. standards organizations, including those working on AI. In subsequent decades, standardization went global. While the United Nations was founded as a governmental venue for diplomacy, the International Organization for Standardization, ISO, emerged as a non-governmental body for peaceful technical coordination across borders. Bit by bit, additional standards bodies formed, cooking up the alphabet soup of acronyms—each a different org, subgroup, or committee—that lies before us today.

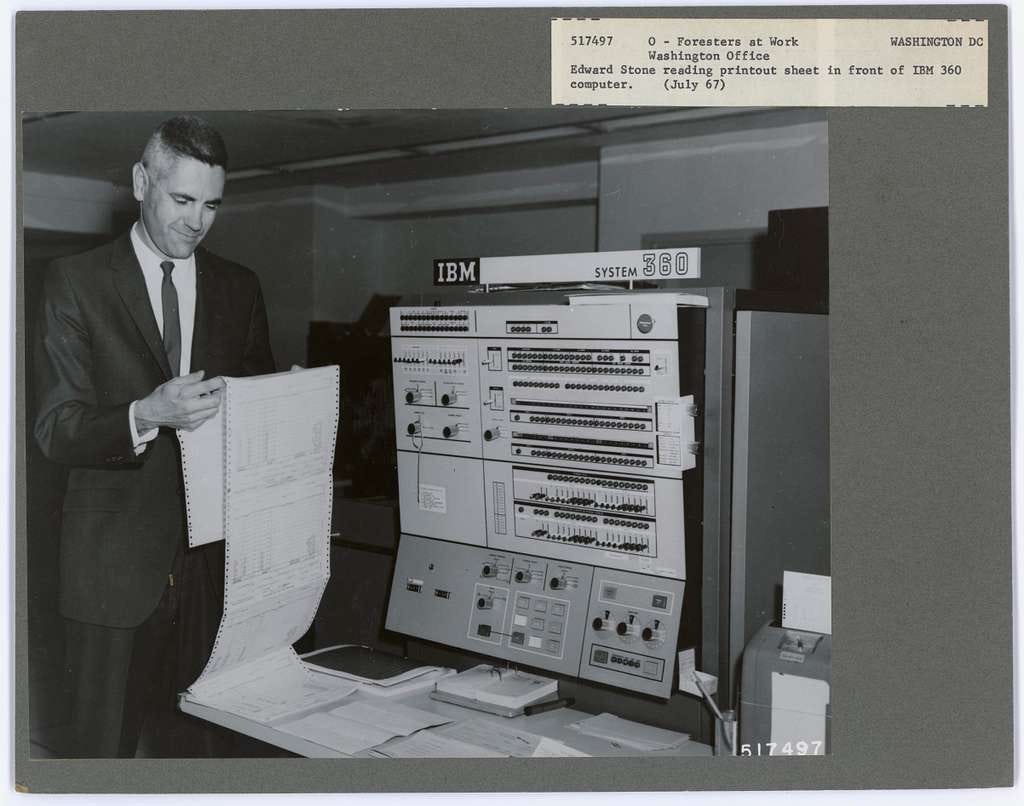

Soon, another transformation for standards was taking shape in the form of digital tech. Back then, computers filled entire rooms of universities, and each manufacturer built hardware and software within its own format. Computers could not run programs written for other systems, and accessories like printers or storage devices were incompatible.

A turning point came in 1964, with IBM’s System/360. Software on one model could more easily run on another; accessories like printers worked across IBM models. You could upgrade and expand computer systems with relative ease.

1969-today: Talking Machines

The year that hippies grooved at Woodstock and astronauts walked on the Moon, the U.S. Defense Department was testing a project that must have seemed minor by comparison: connecting research institutions and government agencies. Yet the Advanced Research Projects Agency Network, ARPANET, which sent its first message in 1969, was the precursor to our transformed world.

Before ARPANET, moving information from one computer to another was a struggle, with researchers forced to carry magnetic tapes or punched cards between locations, while those working far apart had to rely on snail-mail.

To convey information between independent systems, ARPANET adopted packet switching, breaking data into small units that could travel independently and reassemble at their destination. Extending this, Robert Kahn and Vint Cerf began designing a universal communication framework in 1973 for different types of networks to connect. Their collaboration ultimately produced TCP/IP, the Transmission Control Protocol and Internet Protocol that underpins today’s online communication.
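For the curious, the core idea of packet switching fits in a few lines. This is a toy sketch, not ARPANET's actual protocol or TCP/IP: the `Packet` fields and function names are our own illustrative assumptions. It shows only the principle described above: label each chunk, let the chunks arrive in any order, and rebuild the message at the destination.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    seq: int        # position of this chunk in the original message
    total: int      # how many packets make up the whole message
    payload: bytes  # the chunk of data itself


def split_into_packets(message: bytes, size: int) -> list[Packet]:
    """Break a message into chunks of at most `size` bytes, each labeled with its position."""
    if size <= 0:
        raise ValueError("size must be positive")
    chunks = [message[i:i + size] for i in range(0, len(message), size)]
    return [Packet(seq, len(chunks), chunk) for seq, chunk in enumerate(chunks)]


def reassemble(packets: list[Packet]) -> bytes:
    """Rebuild the message from packets that may arrive in any order."""
    if not packets:
        return b""
    expected = packets[0].total
    by_seq = {p.seq: p.payload for p in packets}
    missing = [i for i in range(expected) if i not in by_seq]
    if missing:
        raise ValueError(f"missing packets: {missing}")
    return b"".join(by_seq[i] for i in range(expected))


if __name__ == "__main__":
    message = b"standards let independent machines talk"
    packets = split_into_packets(message, size=8)
    random.shuffle(packets)  # simulate packets taking different routes
    assert reassemble(packets) == message
```

The sequence number is the small piece of shared convention that makes the scheme work: sender and receiver need not coordinate on routes, only on how each packet is labeled.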

A key effect of the TCP/IP standard was decentralization: no single authority could control the flow of data, and any network that adhered to the protocol could connect without permission from central authorities.

In 1989, a British scientist at CERN, Tim Berners-Lee, proposed another transformation that developed into a project called “WorldWideWeb,” which envisioned a global network of documents accessible through software, operating on open standards so that nobody could lock it into a proprietary system. Two standards organizations, the Internet Engineering Task Force and the World Wide Web Consortium, helped to formalize the vision, crafting standards for structuring content (HTML), transferring data (HTTP), identifying resources (URI), and more.

But while standards help spread technology, this diffusion can also lead to greater harm. The expansion of railroads led to more wrecks, forcing uptake of safety standards for signaling, brakes and more. When electricity was first installed in the White House in the late 19th century, President Benjamin Harrison and his wife Caroline were so afraid of shocks that they refused to turn the lights off. Such fears—often well justified—led to the standardization of building and electrical codes. When it came to digital technology, the risks extended beyond immediate physical safety into areas like data theft. This demanded standards such as SSL/TLS to provide security for data sent over computer networks.

A recurrent challenge with frontier tech is that experts struggle to predict how exactly it will affect society. But once it is widely used, it can be sticky and hard to change. The effects of powerful technologies can also be subtle, indirect and slow-burning, for example, if they change how we access and consume information. In the digital era, this has shifted technological standards from periodic safety checks of products towards ongoing processes that organizations can use to identify, evaluate and mitigate a growing suite of risks.

By way of example, the U.S. government’s National Institute of Standards and Technology, NIST, introduced the voluntary AI Risk Management Framework in 2023, building on its earlier framework for managing cybersecurity risks. Likewise, the ISO/IEC committee on AI that is considering standards on everything from red-teaming to LLM interoperability also passed the first official international AI management standard, ISO/IEC 42001, which organizations can use to demonstrate that they are responsibly integrating AI into their operations.

5 LESSONS FROM HISTORY

Studying the past, you see how often standards—by design or bumbling—have shaped the technological present. But what about our technological future?

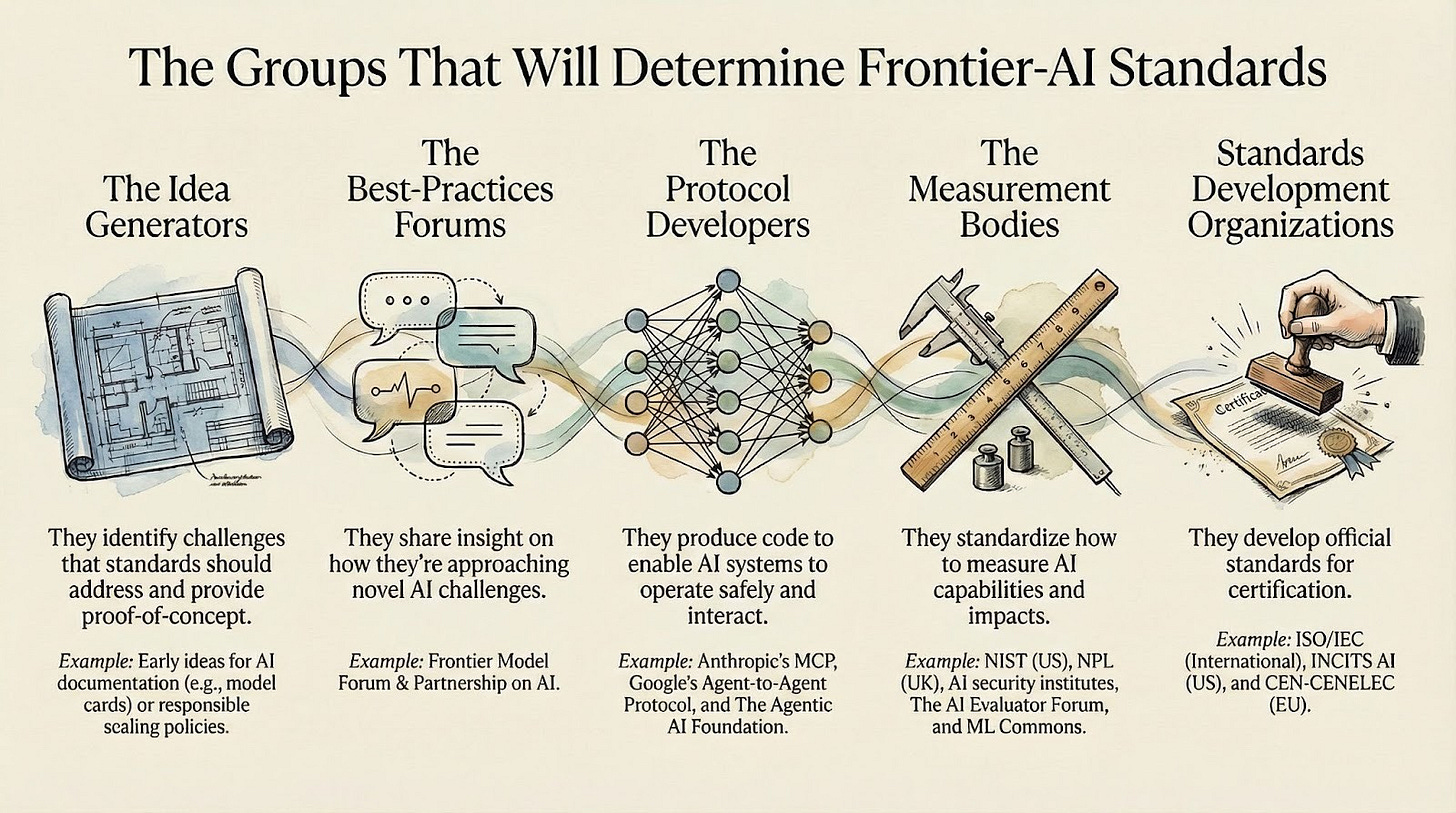

To develop good standards for general-purpose AI models and agents, we’ll need inputs from a range of groups, from scientists with know-how to institutions that can convene. Below [see infographic], we have identified five groups that will perform key roles.

What we mapped includes more than just official standards development organizations. We also want to capture the early spaces where standards emerge in practice before they are formalized on paper. How this works is closer to a swirl of inputs than a steady procession. Sometimes, the same organization or individual may operate in several groups at the same time. Ideas and efforts may also originate in one group, then migrate to another, with different groups offering varying degrees of speed, flexibility, expertise, and perceived neutrality.

For these groups—and the policymakers, business leaders, and advocates who shape their work—what lessons can history teach about our AI future? Here are five:

Standards matter! At best, technological standards chart a wise path; at worst, they fill the path with potholes. Consider the bulky electrical converters that one still needs when traveling—it didn’t have to be that way. On the other hand, when we get it right, the benefits of technology spread faster, more inclusively, and more securely.

The standards process needs to speed up. ISO says that the average time to develop one of its standards is three years, and ISO is not an outlier. Given the pace of change in AI, that is too slow. For priority goals, like finding secure ways for agents to operate and interact, which the U.S. Center for AI Standards and Innovation is working on, we need to find ways to accelerate that don’t jeopardize the overall quality and integrity of the process. This may mean looking across the many groups now focusing on AI standards and finding ways to collaborate early, rather than duplicate. It may mean focusing more on technical protocols and specifications documents that are quicker to develop. It may also mean using AI to help deliberate on and write standards, and moving to more nimble digital formats that are easier to update and use.

We need more efficient ways to input on standards. All standards, from those underpinning steam engines to the Internet, had to chart a unified path through diverging viewpoints, with an end result that did not please everyone. For AI, the challenge will be far greater. It is more akin to 1,000 technologies, and will affect different groups in different ways. This means that any broad directive (say, to “develop standards that make AI fair”) risks an everything-bagel solution. Many groups would rightly be heard, but the output would be too vague to provide the “how” that justifies a standard, leading to confusion, stifled innovation, or a standard that is simply ignored. This suggests that most standards should be precise in scope, targeting specific components of AI systems or specific concerns, from certifying the source and history of online content to combating the leaking of confidential data. More precise standards will make it easier to identify a wider range of relevant voices and incorporate their input.

Frontier AI standards should focus on large-scale risks. Historically, standards have accelerated the diffusion of technology, amplifying its benefits but also, in places, its negative impacts. For AI, foresight and risk management standards will be critical to getting ahead of future risks and speeding adoption. But with a technology as general-purpose, fast-improving, and poorly understood as AI, perfect foresight is impossible. Standards move at a human pace and cannot standardize a future that we cannot perfectly see. As a result, the focus should be on developing scientifically robust standards to address the most consequential or large-scale risks, such as those targeted by labs’ Frontier Safety Frameworks.

Wrong paths are inevitable, so we should catch them early. Now and then, technology stumbles into a poor standard, and it’s onerous to go back. But not necessarily impossible, especially if we catch it early. Consider the U.S. railroads taking action to unify their systems through a mighty coordinated effort. Groups working on AI standards devote much time to building consensus about new initiatives. They should also use the processes available to them to review and withdraw standards, where needed, to avoid sub-optimal lock-in. This also means giving third parties more opportunities to access, understand and constructively critique early AI standards, and designing standards and protocols that are modular and can be swapped out or updated without major downstream consequences.

QUESTIONS FOR YOU

Where do you feel most hope for frontier-AI standards?

Where do you worry about a lack of progress on frontier-AI standards?

When you imagine a missing standard for frontier AI, what is that? A technical protocol specified in code? Or a fuzzier process-standard?

Might your standard become politicized? Is it something that hinges on values? Or might most governments in the world support its adoption?

What’s a scenario in which your proposed standard goes awry? How could you detect and mitigate that?

What would be the primary role of government in your standard? Supplying technical expertise? Convening authorities and experts? Incentivizing your standard via public procurement, regulation, or other methods?

Thank you to Shaked Karabelnicoff, Tom Rachman and Bruno Galizzi for support with research and review. As with all pieces you read here, this is written in a personal capacity. All opinions and any mistakes belong to the authors.

Thanks for sharing your research into this topic! To answer Q6: I don't have a perfect answer, but Singapore published the world's first agentic AI governance framework in January and is taking a layered approach to its regulation of frontier AI. The framework works as a voluntary guide for now, as it actively receives industry and technical feedback.

I helped create XBRL standards for U.S. GAAP and IFRS a couple of decades ago and wish we had had AI to help, but the key was human cooperation and collaboration. AI can help more people learn more of those skills, too. https://pjwilk.substack.com/p/the-best-preparation-for-ai-is-a?r=7zd0l&utm_medium=ios