By Conor Griffin, Don Wallace, and Theo Brown

For 40 years, amid green pastures outside Culham, a small village in Oxfordshire, scientists and engineers toiled at the Joint European Torus. They were attempting to harness nuclear fusion, a force powerful enough to light the sun.

To create fusion, scientists and engineers must heat the nuclei of very light atoms with such intensity that they fuse, instigating a self-sustaining reaction that releases vast amounts of energy. The scale of the challenge is hard to fathom—at its most extreme, the Joint European Torus, or JET, was the hottest point in the solar system, hitting over 150 million degrees Celsius.

JET ceased operations in 2023, generating a record amount of energy in its final experiments. The project is now part of fusion’s history but remains pivotal to its future. A growing number of organisations developing fusion reactors are drawing on JET’s discoveries. The UK Atomic Energy Authority is advancing a national fusion facility, MAST-U, on the Culham site. This will serve as a test-bed for STEP Fusion, the UK’s project to put fusion electricity on the grid, which is set to begin operations in the early 2040s.

But JET didn’t just bequeath novel discoveries. It left behind massive troves of data. That raises a tantalising prospect: Could scientists use this data to train AI models that accelerate the path to fusion power?

This is possible, but challenging. Most JET data is raw and unvalidated. Many important insights are buried in scientists’ logbooks. The data that does exist is not open source and is not generally available for commercial use. Changing this may require agreement from all of JET’s original partners across Europe. One expert we interviewed called JET data a ‘stranded asset’.

Such data predicaments are not specific to JET or to fusion, but apply across all of science, even though science is precisely the domain where AI could yield its greatest benefit to society. New breakthroughs and startups are emerging quickly, from protein design to material design. Scientists are also keen users of fast-improving AI coding agents. But a lack of high-quality data will dampen progress. In most disciplines, large, high-quality datasets like the Protein Data Bank, which underpinned AlphaFold, are absent.

The scientific community needs to tackle this problem, and there are promising signs. Late last year, the UK government launched an AI for Science strategy, which includes a new collaboration with Renaissance Philanthropy to identify priority datasets. The US government’s Genesis Mission aims to train AI models and agents on federal scientific data. Google.org has a dedicated AI for Science fund, which can fund datasets and tooling.

These examples suggest that if the scientific community can identify the data that AI needs, a range of actors could help to fund and deliver it.

This demands what we’re calling AI data stocktakes. The concept is simple: interview leading experts in a given scientific field to understand the main opportunities to apply AI; the data obstacles; and the interventions that could make the biggest difference. Admittedly, some blockages, such as a paucity of engineers, are structural and will take years to fully resolve. AI data stocktakes should identify such challenges, but focus on projects that governments, companies and philanthropies could fund and implement within 1-2 years.

There are promising early efforts to map AI data gaps. But, to our knowledge, there are no concise, accessible documents that explain the AI opportunities in fields such as genomics, weather forecasting, and food security, and convert them into a list of fundable data projects for policymakers and funders to pursue.[1]

In this essay, we offer a proof-of-concept. We interviewed 25 leading experts to create an AI data stocktake for fusion. We focus on the UK, but our analysis and recommendations could be taken up by funders anywhere in the world. Moving forward, we hope to support AI data stocktakes for other scientific disciplines and research problems.

I. Why fusion? Why now?

If fusion is achieved, it would provide a safe, almost limitless source of clean energy.[2] From a scientific perspective, it would yield a better understanding of the plasma that makes up more than 99% of the visible universe. From a social impact perspective, it would help address energy scarcity and unlock energy-intensive innovations, like desalination.

Despite quips about fusion being always 20 years away, 70 years of experiments actually show fairly steady progress, which has continued in recent years, from Germany to China. In most fields, such progress would have solved the problems of interest decades ago. But fusion is an extremely hard problem. And the primary product is only attainable at the end of the line.

To achieve fusion, scientists need to create and control plasma, a super-hot state of matter in which atoms have been stripped of their electrons. Extreme heat and pressure then force the remaining nuclei to collide and fuse.

Scientists are pursuing two main approaches to doing this, with very different physics, data, and AI opportunities. Magnetic confinement fusion uses massive magnets, while inertial confinement fusion uses high-energy lasers.[3] We focus this data stocktake effort on magnetic confinement, as the UK’s STEP project is pursuing that approach, as is Tokamak Energy, the UK’s leading fusion power startup, and Google DeepMind’s fusion team.

The end of the line for fusion is now getting closer, for two reasons. First, the underlying technology landscape has changed. In addition to AI, the discovery of high-temperature superconducting magnets makes it easier to build smaller and potentially cheaper reactors. Second, fusion has traditionally relied on government funding. But in the past five years, a wave of private investment has arrived, with more than 30 companies now pursuing fusion power.

These shifts have injected welcome momentum into the field, but also significant hype. In response, we need a clear view on the primary bottlenecks that AI can address.

II. How to accelerate fusion with AI

To create fusion, scientists and engineers need to predict, control and understand how plasma behaves. The challenge is that plasmas are highly complex and much of their underlying physics—from fluid dynamics to electromagnetics—remains poorly understood.

To make progress, scientists run experiments that create plasmas in a reactor, and use sensors to measure their properties under different conditions. Scientists use these experiments to validate their theories, reveal unexpected phenomena, and test the hardware needed for power-plant-class devices. However, building fusion reactors is extremely expensive and so few machines exist, with most researchers running their experiments at just ~10 leading facilities worldwide. When they can get access to such a facility, scientists must decide how to design the optimal experiment, including how to toggle an array of possible parameters, from the electrical current in a reactor’s coils to the valves that control the gas levels.

Fusion scientists also run computer simulations, including to help design and interpret these costly experiments. This is also challenging, as researchers must simulate a diverse range of phenomena, at very different scales, from the tiny, lightning-fast movements of electrons to the larger, slower evolution of the entire plasma. Even on massive supercomputers, a single simulation may take weeks. For scientists without such resources, it may take many months. As a result, scientists make trade-offs, using assumptions and approximations to run their simulations more quickly and cheaply, but also less accurately.

The challenges don’t stop there. Scientists know that their theories, simulations and experiments are imperfect. But when a gap emerges between what a simulation suggests and what an experiment reports, it is often unclear where exactly the issue falls.

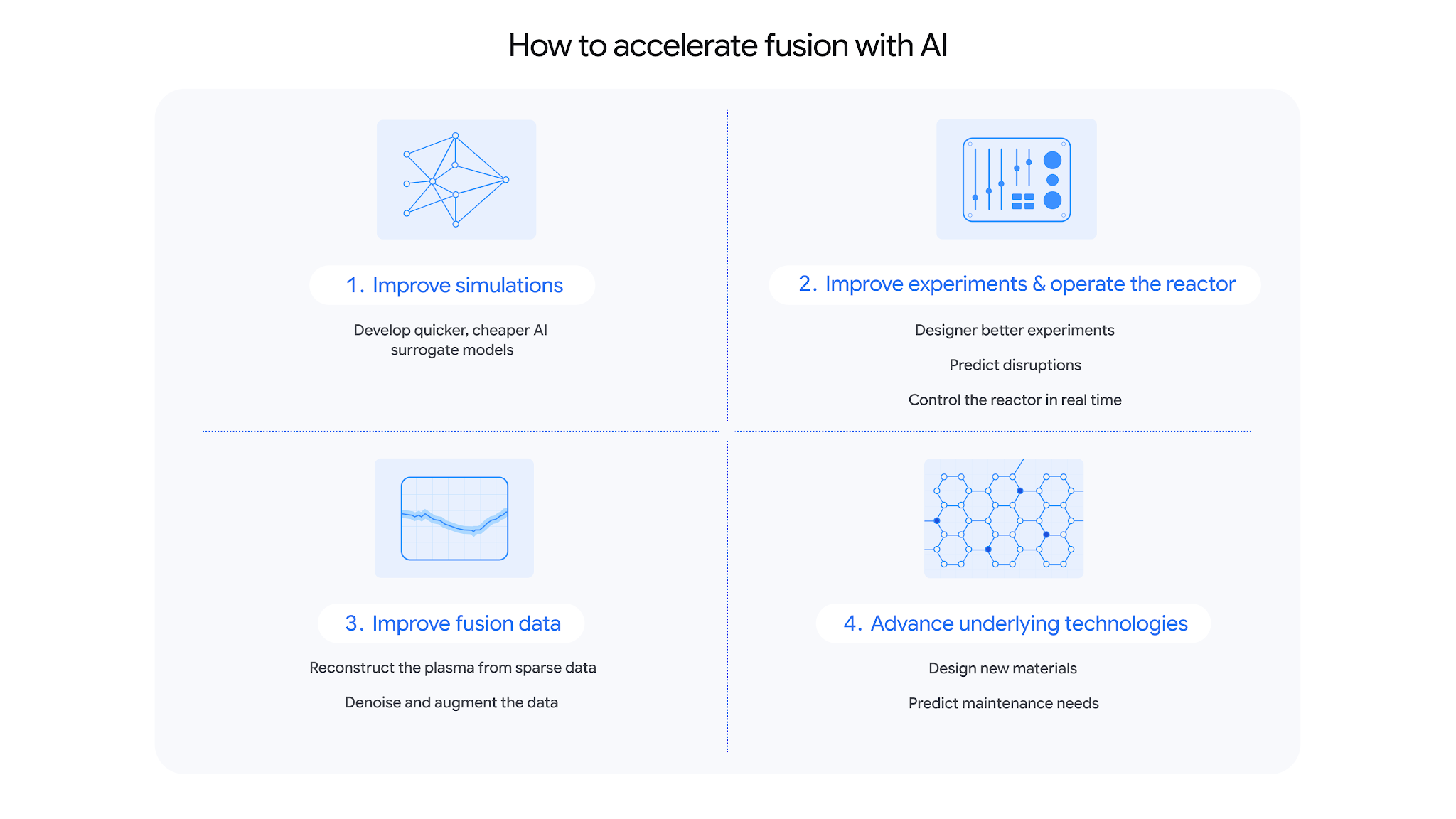

AI can help in four main ways.[4]

1. Improve simulations

Scientists can develop “AI surrogate” models that emulate the predictions from a fusion simulation code, at a fraction of the cost and time. To do so, they run a code many times, varying the input parameters each time. They then use the resulting dataset to train an AI model to predict the outputs of interest much more quickly.
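The workflow described above can be sketched in a few lines. This is a toy illustration, not a real fusion code: `toy_simulation` and its formula are invented stand-ins for an expensive simulation, the input ranges are arbitrary, and a simple nearest-neighbour regressor stands in for the neural networks that practitioners would more typically train.

```python
import math
import random


def toy_simulation(heating_power, density):
    """Stand-in for an expensive simulation code (made-up formula)."""
    return heating_power ** 0.7 * math.sqrt(density)


def build_dataset(n_runs, seed=0):
    """Run the 'code' many times, varying the input parameters each time."""
    rng = random.Random(seed)
    dataset = []
    for _ in range(n_runs):
        inputs = (rng.uniform(1.0, 10.0), rng.uniform(1.0, 5.0))
        dataset.append((inputs, toy_simulation(*inputs)))
    return dataset


def surrogate_predict(dataset, query, k=5):
    """Predict the output for `query` from the k most similar stored runs."""
    nearest = sorted(dataset, key=lambda run: math.dist(run[0], query))[:k]
    # Closer runs get larger weights; the small constant avoids division by zero.
    weights = [1.0 / (math.dist(inputs, query) + 1e-9) for inputs, _ in nearest]
    outputs = [output for _, output in nearest]
    return sum(w * y for w, y in zip(weights, outputs)) / sum(weights)


dataset = build_dataset(n_runs=2000)
query = (5.0, 3.0)
truth = toy_simulation(*query)
estimate = surrogate_predict(dataset, query)
relative_error = abs(estimate - truth) / truth
print(f"simulation: {truth:.3f}  surrogate: {estimate:.3f}  error: {relative_error:.1%}")
```

In this toy the "simulation" is itself cheap, so the surrogate saves nothing. The pay-off only appears when each call to the real code takes hours or weeks, and the up-front cost of generating the dataset is spread over many later predictions.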

Scientists have already shown that AI surrogates can make simulations faster. Moving forward, AI surrogates could make simulations more useful. First, scientists could develop AI surrogates for more accurate, but computationally expensive simulation codes. Second, they could develop ‘integrated models’, like TORAX, to stitch together AI surrogates for different phenomena—from the ‘turbulence’ that determines how well confined a plasma is, to the ‘scrape-off’ layer that simulates the plasma hitting the reactor’s wall. Finally, scientists could move beyond producing one-off AI surrogates that result in a paper and some code, to a world where surrogates are documented, maintained and ready for use in fusion reactors.

2. Improve experiments and operate the reactor

In most fusion experiments, scientists must decide if and how to tune various parameters, while striking a balance between more proven and novel settings. To help, researchers can use AI to predict the optimal parameters for their next experiment by learning from past ones; and to predict how well their experiments will fare. More recently, scientists have also started querying LLMs to check and refine their experimental protocols.

Scientists also use AI to predict the plasma ‘disruptions’ that frequently end experiments, damage machines and are one of the biggest obstacles to a future power plant. AI models can already predict past plasma disruptions with high accuracy. But predicting future disruptions, on more powerful machines, quickly enough to stop them, is an open research challenge.

The ultimate goal is to use AI to help operate the reactor itself. Fusion reactors run on a real-time feedback loop: sensors monitor the plasma, while the actuators, such as the magnetic coils, are adjusted accordingly. The traditional control algorithms used to enable this often struggle with the chaotic, non-linear nature of millions of plasma variables interacting.
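To make the feedback loop concrete, here is a minimal sketch of a traditional controller of the kind such loops have long used. Everything in it is an assumption for illustration: the "plasma" is a single number that decays on its own, the sensor is noise-free, and the gains `kp` and `ki` are arbitrary. A real reactor couples many variables non-linearly, which is exactly where controllers this simple start to struggle.

```python
def run_feedback_loop(target, steps=500, dt=0.1, kp=2.0, ki=1.0):
    """Proportional-integral control of a toy first-order 'plasma' quantity."""
    measured = 0.0   # value reported by the (idealised, noise-free) sensor
    integral = 0.0   # accumulated error, which removes steady-state offset
    history = []
    for _ in range(steps):
        error = target - measured                 # 1. sense and compare
        integral += error * dt
        command = kp * error + ki * integral      # 2. compute the actuator command
        # 3. toy plant: the quantity decays on its own and is pushed by the command
        measured += dt * (command - 0.5 * measured)
        history.append(measured)
    return history


history = run_feedback_loop(target=1.0)
print(f"final value: {history[-1]:.4f}")
```

The loop has the same sense, decide, actuate structure that a learned controller would slot into: a reinforcement-learning policy would replace the line that computes `command`.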

In recent years, researchers have demonstrated how reinforcement-learning agents can learn more effective control policies, including to reduce plasma disruptions. To help these RL agents generalise to novel scenarios and reactors beyond their training data, scientists are developing ‘hybrid approaches’ that integrate some knowledge of physics into the models.

3. Improve fusion data

Fusion experiments are extreme environments. The intense heat and the chaotic nature of the plasma mean that the data that sensors pick up is often noisy or low quality. Some variables cannot be directly measured, and must be inferred, introducing additional sources of error.

Scientists are training AI models to extract clean signals from this noisy data and to learn correlations that allow them to predict data for one sensor, given data for others—a capability that could be critical if sensors in a future reactor get damaged. Scientists are also using AI to train surrogate models that speed up, and better calibrate, reconstructions of the plasma, using the limited experimental data that is available.
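The idea of predicting one sensor from others can be illustrated with the simplest possible model. The sketch below assumes two hypothetical sensors whose readings are linearly related, generates synthetic data for them, and fits a straight line by least squares. Real diagnostics are related in far more complicated ways, which is why practitioners turn to richer models.

```python
import random


def fit_line(xs, ys):
    """Ordinary least-squares fit of ys = slope * xs + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    return slope, mean_y - slope * mean_x


# Synthetic history recorded while both (hypothetical) sensors were healthy.
rng = random.Random(1)
sensor_a = [rng.uniform(0.0, 10.0) for _ in range(500)]
sensor_b = [2.0 * a + 1.0 + rng.gauss(0.0, 0.1) for a in sensor_a]

slope, intercept = fit_line(sensor_a, sensor_b)


def reconstruct_b(reading_a):
    """Estimate sensor B's reading from sensor A alone, e.g. if B is damaged."""
    return slope * reading_a + intercept


print(f"estimated B for A=4.0: {reconstruct_b(4.0):.2f}")
```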

Scientists often care less about the raw data from their experiments, and more about important events, such as when a disruption to the plasma began. Today, they often need to manually inspect graphs and plots to detect these events. AI can help to automate parts of this process and to detect events that scientists may have missed.
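As a rough illustration of automated event detection, the sketch below flags the first sample that departs sharply from a trailing baseline. The signal is synthetic and the `window` and `threshold` values are arbitrary assumptions. It is a simple statistical rule rather than a trained model, but it shows the kind of labelling step that AI could take over from manual inspection of plots.

```python
import math


def detect_onset(signal, window=20, threshold=5.0):
    """Return the first index that departs sharply from the recent baseline."""
    for i in range(window, len(signal)):
        baseline = signal[i - window:i]
        mean = sum(baseline) / window
        variance = sum((v - mean) ** 2 for v in baseline) / window
        spread = max(math.sqrt(variance), 1e-6)  # guard against a flat baseline
        if abs(signal[i] - mean) > threshold * spread:
            return i
    return None


# Synthetic trace: a steady signal with a small ripple, then a sudden collapse.
steady = [1.0 + 0.01 * math.sin(i) for i in range(100)]
collapsed = [0.2] * 50
onset = detect_onset(steady + collapsed)
print(f"event detected at sample {onset}")
```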

4. Improve the underlying technologies

Achieving fusion will require a supply chain rich in technologies that could be applied more broadly. AI could help to accelerate their development.

For example, the chamber walls in a fusion reactor will require new materials that can withstand extreme temperatures. Scientists are training AI surrogate models that speed up the simulations needed to assess a candidate material’s real-world properties, like how strong or resistant to radiation it will be over its lifetime.

A typical fusion reactor also spends much of its time out of operation, at great cost. This makes fusion a logical place to develop predictive maintenance techniques that ingest historical data from sensors and train AI models to learn the subtle signatures that indicate pending breakdowns, allowing practitioners to schedule maintenance or design more reliable systems.

III. The challenges with fusion data

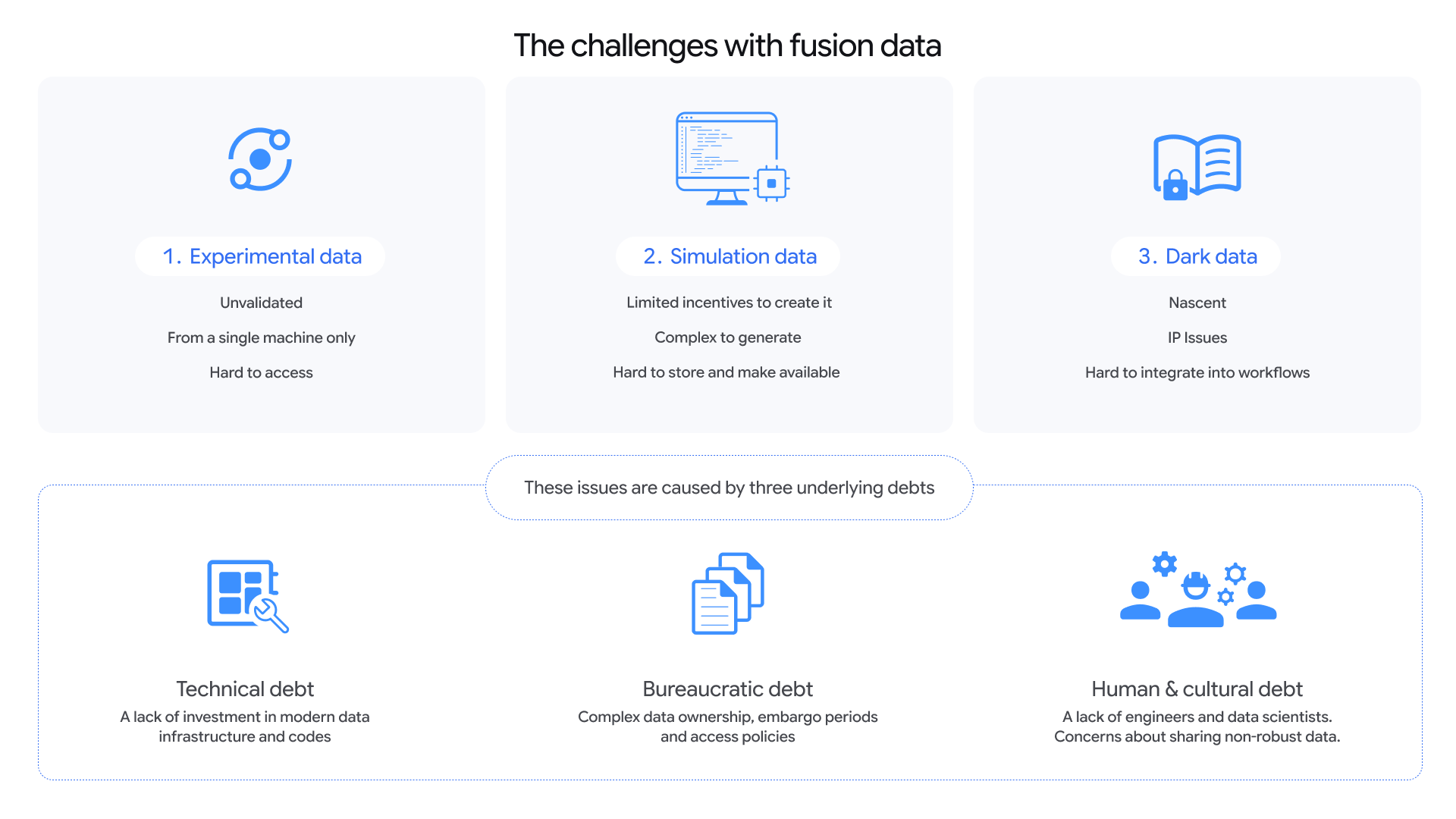

As they pursue these AI opportunities, scientists will need access to three main kinds of fusion data: from experiments, simulations, and sources that are not traditionally available, such as researchers’ logbooks. There are promising efforts underway on this front, but many obstacles.

1. Experimental data: Unvalidated, single-machine and hard to access

Experimental data is the ‘ground truth’ that the sensors in reactors pick up, from line graphs to videos. In magnetic confinement fusion, the challenge is not so much a lack of data, but an excess of raw data that has not gone through the processing needed to make it useful to AI. This processing ranges from addressing noise and imperfections in the underlying sensors, to detecting and annotating important events, such as plasma disruptions.

Currently, the community has to rely on the small, well-validated datasets that do exist, which may contain as few as a few hundred or a few thousand experimental ‘shots’ (individual test runs of a reactor). The high cost of fusion experiments also creates a natural incentive to run experiments that will not fail. This curtails more novel research and means that much of the resulting data sits in a similar region of ‘parameter’ space, so it does not represent the full range of plasma dynamics that scientists want to model.

This experimental data is also not generally available open source or for commercial use. One promising initiative to change this, which several interviewees cited, is UKAEA’s project to open source data from their MAST facility.

However, to develop more general AI models, researchers want multi-machine databases that extend beyond a single facility like MAST. To that end, the IAEA is developing a federated Fusion Data Lake where different institutions would store their data locally but make it accessible via a central data catalog. One challenge with this approach is that fusion facilities have defined fusion variables and stored data in different ways. The Integrated Modelling & Analysis Suite, or IMAS, addresses this by providing a standardised ontology and set of structures for fusion data. It is nascent, but has positive momentum.
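The standardisation problem can be pictured as a translation layer. In the sketch below, two hypothetical facilities record the same quantities under different names and units, and a mapping table converts both into one shared schema. The facility names, field names and units are all invented for illustration and are not actual IMAS identifiers.

```python
# Hypothetical mappings: local field name -> (common field name, unit scale factor).
FACILITY_MAPPINGS = {
    "machine_a": {
        "ip_kA": ("plasma_current_A", 1e3),
        "ne_1e19": ("electron_density_m3", 1e19),
    },
    "machine_b": {
        "plasma_current_MA": ("plasma_current_A", 1e6),
        "density": ("electron_density_m3", 1.0),
    },
}


def to_common_schema(facility, record):
    """Rename fields and convert units so records from any facility line up."""
    mapping = FACILITY_MAPPINGS[facility]
    common = {}
    for local_name, value in record.items():
        common_name, scale = mapping[local_name]
        common[common_name] = value * scale
    return common


shot_a = to_common_schema("machine_a", {"ip_kA": 750.0})
shot_b = to_common_schema("machine_b", {"plasma_current_MA": 0.75})
print(shot_a, shot_b)
```

Once every facility exposes its data through a shared vocabulary like this, a model can be trained across machines without bespoke code for each one.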

2. Simulation data: No incentives, process, or place to host it

In theory, researchers should be able to run fusion simulation codes many times and train AI surrogate models on the resulting data to reproduce the outputs at a fraction of the cost. In practice, most scientists run a simulation to answer a single, narrow, physics question. They do not run a large number of simulations to build representative datasets to train AI surrogates—a very different activity.

That activity is also a hard one. There is no standard procedure to follow to generate a dataset for training an AI surrogate model, and the codes are often finicky to use. Most simulation codes contain ‘free parameters’—knobs that scientists must decide how to best tune—a practice that can be as much an art as a science. The datasets can also be huge and there is no obvious location to store them, although some early examples exist.

3. Dark data: Nascent, IP issues, and hard to integrate into workflows

‘Dark data’ describes the contextual information that scientists generate that is not captured in structured datasets. This includes notes scribbled in experimental logbooks, where scientists describe the procedures they ran, the hardware issues they faced, and the phenomena they observed. For simulations, it includes the many nuances needed to run and interpret a code’s results successfully, and the many undocumented imperfections to be aware of.

Accessing this dark data could help ensure that AI systems do not focus on the wrong things—for example, when an anomaly in the data is caused by an equipment failure or error, rather than a meaningful phenomenon. It could also provide AI with a window into the entire research process, including its many dead-ends, rather than just the final result.

Researchers are using LLMs to try to make dark fusion data accessible, for example by enabling scientists to query experimental logs and archive documents. But much of the data is not well-annotated, there are IP issues in accessing it, and it is not yet clear how to integrate the data into practitioners’ daily workflows.

The three ‘debts’ holding fusion data back

Many of these challenges with fusion data result from three underlying issues, which have compounded over time into systemic debts that inhibit the use of AI today.

1. Technical debt

The fusion community has traditionally had to prioritise getting large, complex machines to work, rather than building infrastructure to collect, curate, and share data. As a result, activities like data annotation and writing high-quality code are underfunded. Many leading fusion codes were created decades ago and have evolved slowly, while the quality of experimental data is limited by the capabilities of the sensors available.

2. Bureaucratic debt

The large costs of fusion experiments and the traditional reliance on government funding mean that many fusion projects have a complex web of owners and collaborators, which can make agreeing on new data initiatives difficult. For example, JET was sponsored and funded by Euratom, the EU’s nuclear research community. Its scientific exploitation was managed by EUROfusion, a pan-European network of fusion research labs. UKAEA managed engineering and operations. Releasing its data may require agreement from all of these actors.

There are other bureaucratic hurdles too. Scientists who run fusion experiments often want an embargo period on the resulting data so that they can prepare a publication. Such embargoes are rational, common in science, and largely supported, but many interviewees felt that they had become too long. Fusion data is also subject to diverging open-source policies. For example, the MAST experiment was funded by UK Research and Innovation, which has strong open data requirements. The follow-up MAST-U experiment is funded by the UK Department for Energy Security and Net Zero, which does not have the same policies. Many fusion companies also do not open source their data.

3. Human and cultural debt

The fusion community does not have enough software engineers and experts who are able to clean data, attach confidence levels, and curate it for AI use. As a result, physicists must take on many tasks that are outside their core areas of expertise, including writing high-quality code.

This issue is compounded by a research culture that inhibits data sharing. Scientists are constantly pushed to move on to the next experimental campaign, rather than to validate older data. This stops some scientists from sharing their data, because they fear that end users will not appreciate the resulting gaps and do bad science with it. Or they fear that they themselves will be criticised for releasing ‘unscientific’ data.

IV. Recommendations

Below we provide eight recommendations to address these data limitations and accelerate fusion with AI. Each project could be led by a mix of government bodies and funders, like the Department for Science, Innovation and Technology and UK Research and Innovation; public research organisations, like the UK Atomic Energy Authority; companies; universities; and philanthropies. Where possible, the UK should look to collaborate internationally, for example with the US Genesis Mission and the International Atomic Energy Agency.

1. Strengthen the UK’s lead in open fusion data

Expand FAIR MAST, the UK’s pioneering open sourcing of experimental data from its MAST facility, by adding data from the follow-up MAST-U facility and making the user interface more accessible. This will require the UK Department for Energy Security and Net Zero clarifying that open data policies apply to MAST-U, funding at least five data engineers over a two-year time period, and ensuring that the project has sustainable compute and data storage.

2. Liberate 40 years of data from the Joint European Torus

Launch a project to open source at least 30% of JET experimental data by 2028. This will require agreement on what data to release. For example, should the project only release validated, curated data relating to notable discoveries? Or should it also release data that is raw, validated only in part, or which relates to ‘normal’ machine behaviour? Second, and much harder, will be securing agreement from all relevant institutions to release the data.

3. Launch a competition to predict plasma disruptions

Fund a competition to see which AI model can best predict future plasma disruptions in new experimental campaigns, building on early examples and work in this space. This could include funding dedicated experimental shots on machines such as MAST-U, to evaluate models on challenging edge cases. Beyond accuracy, sub-competitions could evaluate models on other important questions. Can the model make predictions with little data, such as when sensors become damaged? Can it predict disruptions across different reactors? Can it predict disruptions with sufficient lead time to prevent them? And can it shed new light on why disruptions are occurring?
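One way such a competition might score entries on lead time is sketched below. The scoring rule, the category names and the example shots are our own assumptions rather than an established benchmark: each shot pairs the time a model raised an alarm with the time the disruption actually occurred, and either may be absent.

```python
def score_predictions(shots, min_lead_time):
    """Classify each shot given (alarm_time, disruption_time); None means absent."""
    counts = {"timely": 0, "late": 0, "missed": 0, "false_alarm": 0, "true_negative": 0}
    for alarm, disruption in shots:
        if disruption is None:
            # No disruption happened: any alarm is a false alarm.
            counts["false_alarm" if alarm is not None else "true_negative"] += 1
        elif alarm is None or alarm > disruption:
            counts["missed"] += 1
        elif disruption - alarm >= min_lead_time:
            counts["timely"] += 1   # early enough for the control system to act
        else:
            counts["late"] += 1     # correct, but too close to the event to help
    return counts


# Times in seconds from the start of each (made-up) shot.
shots = [(1.20, 1.50), (2.48, 2.50), (None, 3.00), (0.90, None), (None, None)]
results = score_predictions(shots, min_lead_time=0.05)
print(results)
```

Separating "late" from "timely" matters because a prediction that arrives too close to the disruption counts as accurate on paper yet is useless for preventing it.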

4. Prototype the future of AI-enabled scientific data curation

Expand the platform that UKAEA recently developed to enable human experts to use AI to annotate experimental data, by adding data from other fusion facilities; increasing the complexity and variety of the metadata that is captured; and training AI models to directly annotate an increasing share of this data.

5. Make leading simulation codes AI-ready

Launch an effort to modernise priority fusion simulation codes, including to make it easier to train AI surrogate models based on them. This could build on early efforts in this space and target codes, such as JINTRAC, which are important to the UK’s proposed STEP Fusion power plant and the international ITER effort. The project could start by modernising the codes’ documentation and ‘refactoring’ them so that they are compatible with modern chips, like GPUs and TPUs, and allow for parallel data generation. It could then open source the codes, with a plan for how to maintain them. Throughout the modernisation process, it could test the usefulness of AI coding tools to the tasks at hand.

6. Demonstrate a new state-of-the-art for AI surrogate models

Fund small teams of software engineers and experts to develop AI surrogate models of important, computationally expensive phenomena in fusion simulations. The project should ensure that all newly created surrogates have state-of-the-art documentation, data provenance and version control. It should release the data used to train and validate the surrogates and develop software pipelines to automate time-intensive aspects, such as organising the data.

7. Use AI agents to preserve expert fusion knowledge for the future

Gather a group of leading experts on a priority fusion simulation code, and equip them to use AI agents to make the tacit knowledge involved in running that code available to the wider research community. To do so, the experts could task the agent with running the code. As it seeks to execute, the agent would have an ‘internal monologue’ that the experts could trace, steer and intervene on. The end result would be a series of documents, such as markdown files, that capture the important dark data needed to run the code well.

8. Create Fusion-Bench to measure and drive LLM performance

Assign leading fusion experts to create an evaluation metric to quantify how well leading large language models understand core fusion concepts. This would make it easier to improve the usefulness of LLMs for downstream tasks in fusion. This evaluation will be more difficult to create than in disciplines like maths or computer science, where it is easier to automatically verify a model’s performance. But the experts could determine the most useful approach, which will likely involve a combination of question-answering and task performance.
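The question-answering half of such a benchmark could start from a harness as simple as the one below. It is a sketch under heavy assumptions: the two questions are illustrative, `stub_model` is a placeholder for a call to a real LLM, and exact-match scoring is far cruder than the expert-designed grading that the benchmark would actually need.

```python
def normalise(text):
    """Lower-case and collapse whitespace so trivial differences are ignored."""
    return " ".join(text.lower().split())


# Illustrative items only; a real benchmark would be written by fusion experts.
BENCHMARK = [
    {"question": "Which fusion approach confines plasma with magnets?",
     "answer": "magnetic confinement"},
    {"question": "Which fusion fuel must be bred from lithium?",
     "answer": "tritium"},
]


def stub_model(question):
    """Placeholder for a real LLM call; returns canned answers."""
    canned = {
        "Which fusion approach confines plasma with magnets?": "Magnetic  Confinement",
        "Which fusion fuel must be bred from lithium?": "deuterium",
    }
    return canned.get(question, "unknown")


def evaluate(model, benchmark):
    """Fraction of questions answered with an exact (normalised) match."""
    correct = sum(
        normalise(model(item["question"])) == normalise(item["answer"])
        for item in benchmark
    )
    return correct / len(benchmark)


score = evaluate(stub_model, BENCHMARK)
print(f"score: {score:.0%}")
```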

V. Six open debates

The experts we interviewed disagreed on some points. Despite the framing below, few are either/or debates. Rather, most are about relative degrees of emphasis.

Incrementalism vs novelty: Should we build on the early AI opportunities that fusion practitioners have already showcased? Or pursue more novel, uncertain AI ideas, such as training general-purpose ‘fusion foundation models’ or using AI ‘world models’ to pursue new kinds of fusion simulations?

The past vs the future: Should we strive to get as much value as possible out of older fusion data, like JET? Or, do the costs mean that we should accept our losses, and focus on making future fusion experiments AI-ready?

Science vs engineering: Are efforts to validate, annotate and standardise data part of an ultimately doomed quest for perfect scientific understanding in fusion? Should we instead use AI to embrace a more engineering-led approach that can get the machines to work with noisy, imperfect, data?

Domestic vs international: Should the UK rejoin ITER, the world’s flagship international fusion collaboration, which it left following Brexit? Or should the UK focus on domestic efforts, perhaps in collaboration with priority partners, like the US and IAEA?

Magnetic vs alternatives: Should the UK continue to focus on magnetic confinement fusion as the most realistic pathway to a future power plant? Is magnetic also a better bet for AI because it produces much more data and doesn’t have the same associations with the security establishment, which makes data access easier? Or should the UK invest more in inertial confinement and alternative fusion efforts, given the country’s diverse academic expertise, its historically strong relationship with the US National Ignition Facility, and notable assets, such as a world-leading laser?

Public vs private: Should the UK government try to derive more immediate value from its fusion data? For example, should the UK license some data to companies, to cover the costs of data processing and annotation? If so, should local startups pay less? Or would such efforts hurt the UK’s goal of developing a world-leading fusion sector?

_________________

This essay was originally posted on the Google DeepMind website and is a summary of a 20-page report that contains more details and examples.

Thank you to the following experts who let us interview them, reviewed the draft, and/or provided other support, as well as those who prefer to remain anonymous. All mistakes belong to the authors and no expert spoke to us on behalf of their organisation.

Jonathan Citrin, Brendan Tracey, Cristina Rea, Nathan Cummings, Andrea Murari, Jess Montgomery, George Holt, Alain Becoulet, Matteo Barbarino, Arthur Turrell, Adriano Agnello, David Dickinson, Steven Rose, Alessandro Pau, Kristina Fort, Charles Yang, Federico Felici, Tim Dodwell, Sam Vinko, Aidan Crilly, Lee Margetts, Tom Westgarth, Lorenzo Zanisi, Chris Packard, Justin Wark and Stanislas Pamela.

[1] Fusion has several characteristics that make an AI data stocktake exercise tractable, including a relatively small and centralised research community and early efforts to build on, like the open-source FAIR MAST initiative and the IMAS data standardisation effort. Fields like genomics, weather forecasting, and food security look quite different, and so careful thought is needed on how to best scope AI data stocktakes in these fields. Nevertheless, we think they would be useful.

[2] There are caveats to the claim that fusion power would be essentially limitless, emission-free, and perfectly safe. One of the input fuels, tritium, is not widely available and scientists will need to use nascent ‘blankets’ to breed it from lithium. Certain parts of fusion reactors will become radioactive over time, although they can likely be recycled after ~50 years. Thermonuclear weapons use fusion reactions. However, the weapons first require fission reactions and fissile materials like enriched uranium and plutonium.

[3] There are other approaches to inertial confinement fusion that do not use lasers.

[4] For more in-depth reviews of AI for fusion opportunities, see publications from MIT, the Clean Air Task Force, IAEA, FusionFest, and the US Department of Energy.