Q&A with Ethan Mollick

"People like AI when they use it themselves; they don’t like AI writ large"

How can companies get their employees to use artificial intelligence when human intelligence remains sharp enough to know that this risks replacing jobs? How should education revise itself for the ever-revising technological world that students emerge into? And how should we understand the love/hate relationship so many people have with AI?

Ethan Mollick—professor of management at the Wharton School of the University of Pennsylvania and bestselling author of Co-Intelligence: Living and Working with AI—is among the leading public intellectuals commenting on AI adoption, connecting the latest scholarship to real-world usage, including his own tinkering with each new model.

AI Policy Perspectives caught up with Ethan to hear his latest thinking on everything from agentic systems, to why scientific publication is broken, to how workers emotionally relate to AI colleagues. Too much chatter, he argues, considers this transformation at the broadest level. Too little digs into the practicalities of getting it right.

—Tom Rachman, AI Policy Perspectives

[Interview edited and condensed for clarity]

Tom: In your 2024 book Co-Intelligence, you proposed four rules for human-AI collaboration, including that people should oversee and verify AI outputs. But doesn’t the value of AI agents come from people not overseeing and verifying everything?

Ethan: This is where policy matters a lot, because these are choices now. In the “co-intelligence era,” you’d prompt the AI to do something in a chatbot, and it would give you an answer. You’d prompt it again, and it’d give you another response. The human was in the loop. And not being in the loop was really dumb, because it meant that you were just pasting in the AI’s answer, and then you’d get in trouble, like a lawyer in front of a judge, or whatever it was. Capabilities were weak, so human-in-the-loop mattered a lot.

But with agentic systems that can do hours of work on their own, it’s now a design choice. When do we want humans-in-the-loop? When is human verification valuable? When is human verification morally required? When is it legally required? What kind of interventions move the system forward? I feel there has been a complete lack of deep understanding about these topics.

Tom: You’ve said that, with agentic systems, management becomes a superpower. Can you explain this?

Ethan: Increasingly, systems look like mini-organizations as they get subagents they can delegate to. So the best way to organize is to give the AI a clear direction of where you want to go. And it turns out that this looks a lot like management. When do you want the AI to check in with you? How do you write a really clear brief? What checks are important? What tests do you want to run? What’s acceptable? What’s not acceptable? Those are management questions.

THE WORKPLACE

Tom: You co-wrote a study last year involving a field experiment at Procter & Gamble that showed AI usage enhanced employee performance. But there were other interesting findings besides that.

Ethan: The most interesting piece about it was that people liked working with the AI, and that it substituted for people emotionally. The second interesting piece was the “smoothing” of capabilities: technical people previously had technical ideas, while business people had business ideas. But AI smooths out both. If technical people can do business work and business people can do technical work, what that tells you is that we have to redesign organizations.

Tom: The emotional side—that using AI improved people’s feelings about the work—was surprising to me; I wasn’t sure what to make of it.

Ethan: What to make of it? That views of AI are complicated. If people keep saying, “Yeah, AI is going to destroy all jobs, and may kill everyone on Earth…but might not,” and then ask, “Why is AI unpopular?!”, that doesn’t feel like a hard question. People like AI when they use it themselves; they don’t like AI writ large. It’s not surprising to me that AI makes your job better, because a lot of jobs suck! And if we do good design work with AI, it makes people’s lives better. If we just let it loose on the world, and tell management that the only option they have is automation, then we’re in big trouble.

Tom: Many knowledge workers seem to be using AI in secret right now, perhaps from fear of being exposed as less valuable.

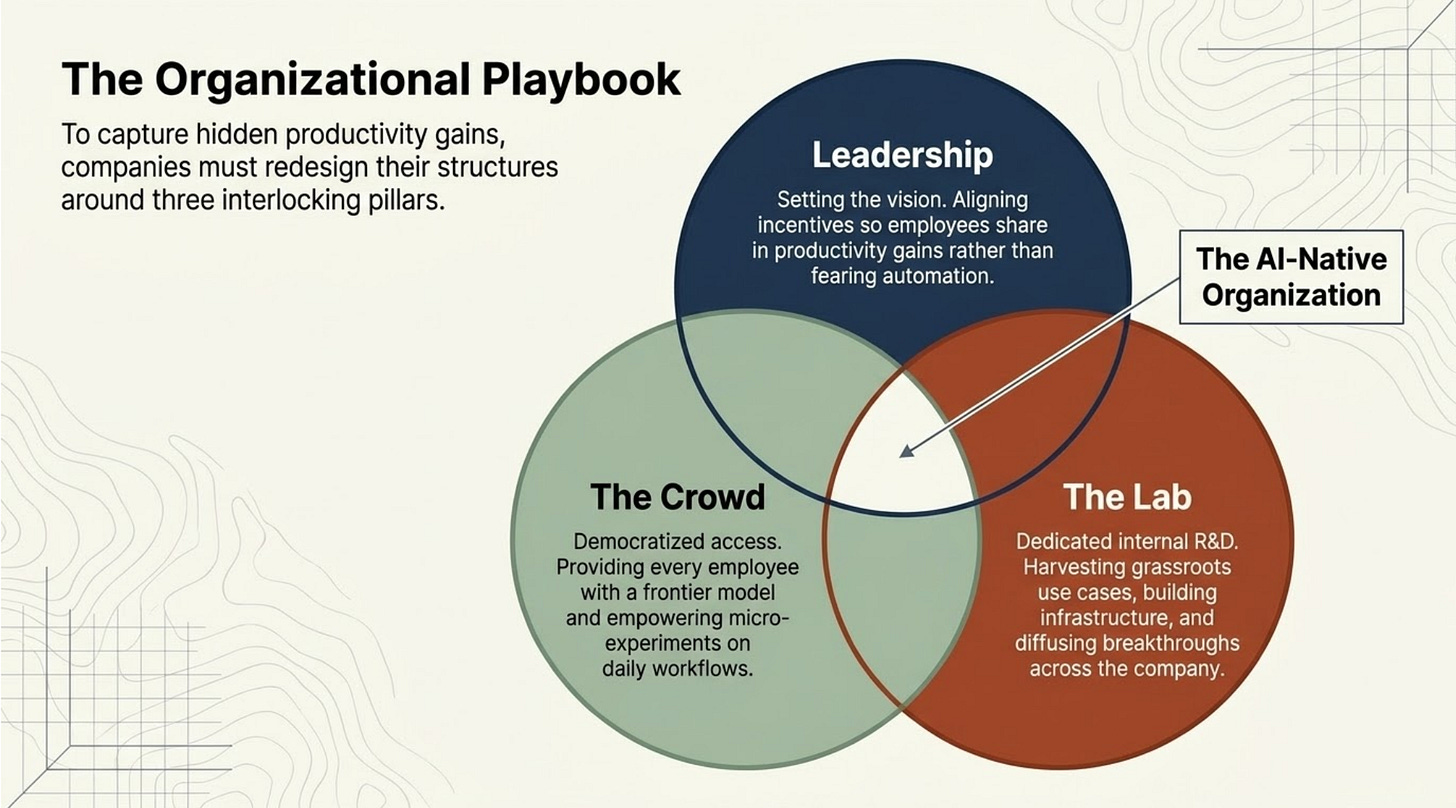

Ethan: This is a leadership problem. The incentives have to be aligned properly. Currently, it’s, “I’m going to automate your jobs away” or “I’m not going to share with you any of the gains the company gets.” People are exquisitely tuned to rewards. So it’s about leaders articulating a vision of what the world looks like with AI for employees. “What should I expect to do? How are people rewarded for doing the right thing? If they automate 90 percent of my job, what happens to me?” Without those answers, everything else is secondary.

CHANGING ORGANIZATIONS & EDUCATION

Tom: You have a concept of “leadership, lab, and crowd.” Could you explain?

Ethan: There was a huge amount of R&D in the 1900s about how you organize work, and 40 percent of the American advantage in business came from management. In the last 30 years, a lot of that muscle has died. But experimentation is important, and leaders need to guide it. So there are three things that organizations need to be successful with AI. First is “leadership”: a team that articulates a clear vision of the future and is willing to experiment. Then there’s “the crowd,” the employees who might actually use AI. They need access to a frontier model, they need clear rules, and they need reward systems. Then there is “the lab,” and this is the piece a lot of companies are missing. You need a dedicated team working on AI innovation. It can’t be just a technical team; this is not an IT department problem. If you don’t have that piece, you’re not building things for the future. And where does the crowd go when they have a good idea? “I came up with a breakthrough idea that saves 90 percent of effort!” How does that diffuse through the organization? That’s where you need the lab.

Tom: If AI transforms the workplace, that should change how we educate the next generation, right?

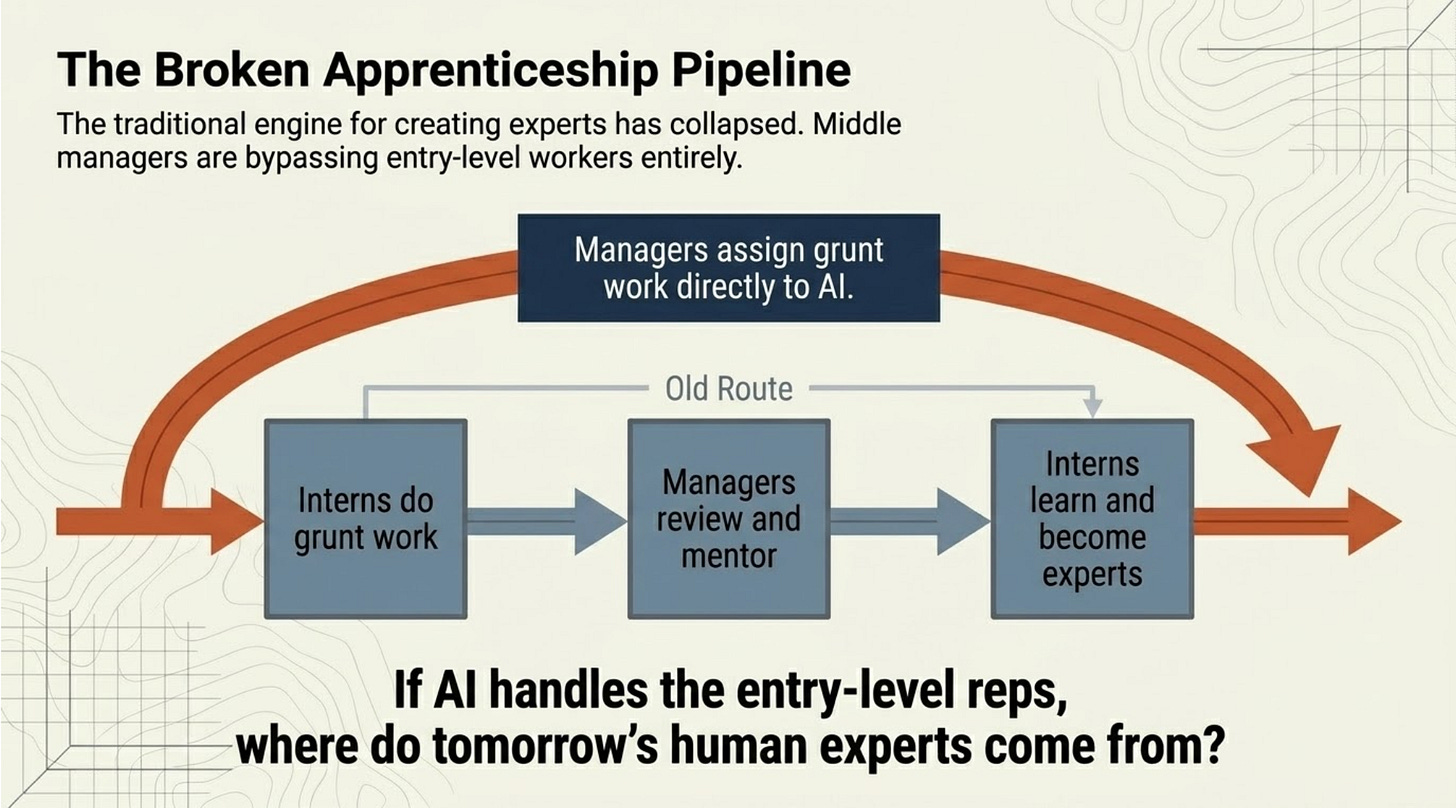

Ethan: The early workplace is under a lot of threat because the old apprenticeship model just broke. The idea was that there were tasks—especially in white-collar work—that were tedious and annoying for managers to do. But you could pay a relatively cheap person to do them, and that person would learn as a result of this, and receive mentorship. So we had this amazing machine for talent: we taught you, we evaluated you, and you got paid, and you were doing work we needed. A junior person’s goal was to produce good work that made managers happy, so that they got promoted. But now the junior person is worse than AI, so they’ll use AI to do their work. And the middle manager’s goal was to give work to a junior person who’s not great, and give them feedback so they get better, so that the middle manager has to do less work. And that broke because the middle manager would rather assign work to the AI.

Tom: But in terms of the educational system, what should change if workplaces no longer offer that apprenticeship role?

Ethan: Education is really screwed up right now, but it was already screwed up for lots of reasons. It’ll be fine; we’ll figure this out. But it’s gonna take a bunch of years. It’s clear from early evidence that AI will be a tutor both outside class and inside class. It’ll do activities and give guidance. But schools are places where we can compel students not to use AI, have them in a room, evaluate them, and teach them the things that we want them to learn. As long as we think people need to be educated, this is the best space to do it in. So students are cheating in the meantime? They were cheating before! We can give them different tests; we could do in-class writing assignments. There can be a weird, backward-looking “Education won’t adjust!” view. How many death spirals does higher education need to be in at any one moment? The pieces are there to reconstruct a better form of education. It’s just a massive changeover.

BETTER SCIENCE & BETTER THINKING

Tom: What about academia? There’s been much talk about AI-written papers, and how they could overwhelm academic publishing. But could AI benefit the peer-review process, and help with the dissemination of academic findings?

Ethan: This is another area where more lifting is needed. It’s a shame that we are building AI co-scientists, but not thinking about the rest of the process that’s needed to actually make science happen. It’s one thing to have science produce more papers. We have no ability to absorb more papers. Every publication is overwhelmed. Our dissemination techniques were already bad, but now they’re really broken.

Tom: As a case in point, you submitted a paper around 2023 and wrote publicly about it at the time, which made your term “the jagged frontier” (the idea that AI capabilities advance in some areas but remain behind in others) highly influential. Yet the academic paper itself only just came out, three years later!

Ethan: One of the rejections we got early on was reviewers saying that they knew this already, and they cited a bunch of working papers—that cited the working paper we had submitted! This is not a unique story. Opening one part of the bottleneck without opening the others becomes a problem. But it takes longer to solve systemic problems of how science operates than to solve the problem of producing more papers.

Tom: Another concern in education and science is cognitive offloading, that people may surrender thinking to machines, and lose those skills. On the other hand, AI’s value comes from machines thinking for us. What are examples of bad offloading and good offloading?

Ethan: We offload all the time, right? But we also force people not to offload. You could offload all your mental math to calculators, but we force students to do some math by hand in an attempt to get them to learn stuff. And we can enforce those rules in school. In the world of work, we are not used to thinking about training, about what should be offloaded, and what shouldn’t be. We need to make decisions about this. So, Rolls-Royce still employs someone to paint stripes on a car by hand, and that’s an obvious pushback against deskilling in one area. But Ford doesn’t do the same thing. These are choices we get to make at an organizational level, depending on what we think is valuable.

ADAPTING TO CONSTANT CHANGE

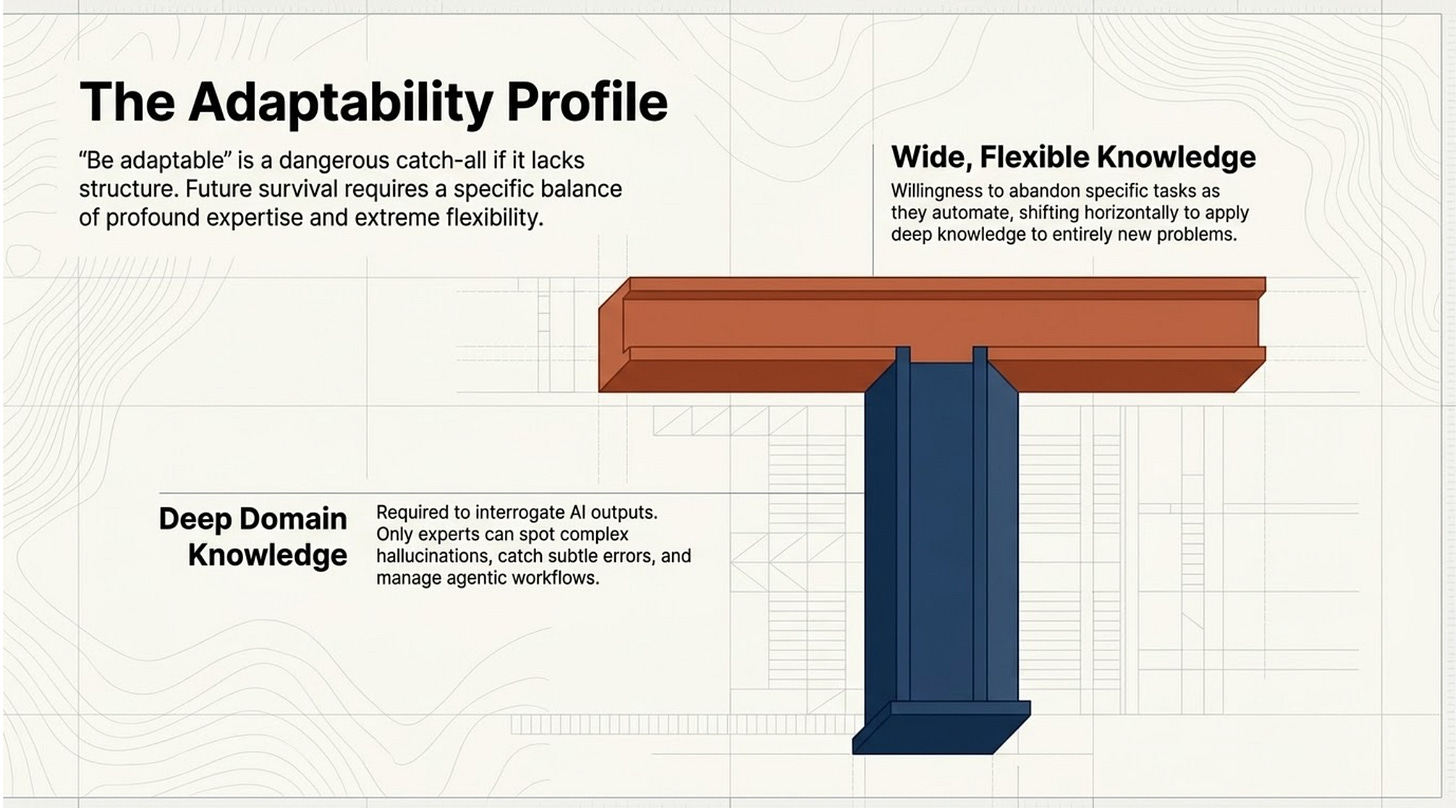

Tom: A point you’ve made to young people about the AI future is that they’ll need to be adaptable. When educators talk about teaching adaptability, it sometimes boils down to encouraging “creativity” and “critical thinking.” Another view is that you’re more likely to be adaptable by developing deep domain knowledge. For you, what does learning adaptability mean?

Ethan: Adaptability requires both deep domain knowledge and wide knowledge: T-shaped behaviour is probably the way to go. I feel like it’s a throwaway line: “Well, we’ll all be adaptable!” If we could teach that, that’d be amazing. People are more adaptable than we think, so part of this is that people will figure stuff out. But we can’t just throw up our hands and say, “Be adaptable!” You need deep enough knowledge to go into a field. You need broad enough knowledge so that, as one piece of knowledge becomes less useful, you can move to the next one. And we need to help people be adaptable by building systems that place them inside an organization and let them shift roles. I sometimes worry that adaptability is a catch-all for “Don’t worry! It’ll be fine!”

Tom: Another side is that not everybody will be equally adaptable. Could it be that the AI future favours certain circumstances and characteristics?

Ethan: A lot of these characteristics were already good characteristics to have. Does AI act as a multiplier of them? Does it disincentivize some people? We’re now past the edge of what we know. Ultimately, all of these questions come down to the same exact question, which is: How good does AI get, how fast? We need to articulate more clearly what we think that future looks like. Because you can’t say, “We’re going to build a superintelligent machine that’s better than all humans at every intellectual task, but let’s start thinking about adaptability!” Unless you mean, “Let’s adapt to UBI” [where everyone gets Universal Basic Income cash payments from the government]. And then we should be spending a lot more time thinking about those issues. Not everyone in the labs believes this, and I find that the econ people believe it less. But you can’t have this message of, like, “All work will be obsolete!” and then have detailed, ticky-tacky conversations about what you should do in eighth grade. Because, by the time you enter the job market, there are no jobs. So give me the pathway that you think is there; that becomes the most important question to ask.

Tom: Are there other important questions I didn’t ask?

Ethan: We need to start thinking about getting into fields, and understanding what the changes are; we need to get detailed. That is where the research is missing. Another large-scale econ picture about AGI isn’t as useful. A general-purpose technology affects everything, so we need policymaking for everything, from power generation to accountants, including when the government says it’s okay to do this. There’s just this assumption that if we do the macro stuff, everything will work out. I’d rather see a lot more micro stuff: a thousand flowers blooming everywhere, trying to come up with different approaches.
